AMD’s 2016 Linux Driver Plans & GPUOpen Family of Dev Tools: Investing In Open Source

Earlier this month AMD’s Radeon Technologies Group held an event to brief the press on their plans for 2016. Part of a larger shift for RTG as they work to develop their own identity and avoid the mistakes of the past, RTG has set about being more transparent and forthcoming in their roadmap plans, offering the press and ultimately the public a high-level overview of what the group plans to accomplish in 2016. The first part of this look into RTG’s roadmap was released last week, when the company unveiled their plans for their visual technologies – DisplayPort/HDMI, FreeSync, and HDR support.

Following up on that, today RTG is unveiling the next part of their roadmap. Today’s release is focused on Linux and RTG’s developer relations strategy, with RTG laying out their plans to improve support on the former and to better empower developers on the latter. Both RTG’s Linux support and developer relations have suffered somewhat from RTG’s much smaller market share and more limited resources compared to NVIDIA, and while I don’t think even RTG expects to right everything overnight, they do have a clear idea of where they have gone wrong and of some of the things they can do to correct this.

Linux Driver Support: AMDGPU for Open & Closed Source

The story of RTG’s Linux driver support is a long one, and to put it kindly it has frequently not been a happy story. Both in the professional space and the consumer space RTG has struggled to put out solid drivers that are competitive with their Windows drivers, which has served to only further cement the highly favorable view of NVIDIA’s driver quality in the Linux market. Though I don’t expect RTG will agree with this, there has certainly been a very consistent element of their Linux driver being a second-class citizen in recent years.

To that end, RTG has been embarking on developing a new driver over the past year to serve their needs in both the consumer and professional spaces, and for this driver to be a true first-class driver. This driver, AMDGPU, was released in its earliest form back in April, but it’s only this month that RTG has finally begun discussing it with the larger (non-Linux) technical press. As such there’s not a great deal of new information here, but I do want to spend a moment highlighting RTG’s plans thus far.

AMDGPU is part of a larger effort for RTG to unify all of their Linux graphics driver needs behind a single driver. AMDGPU itself is an open source kernel space driver – the heart of a graphics driver in terms of Linux driver design – and is intended to be used for all RTG/AMD GPUs, consumer and professional. On the consumer side it replaces the previously awkward arrangement of RTG maintaining two different drivers, their open source driver and their proprietary driver, with the open source driver often suffering for it.

With AMDGPU, RTG will be producing both a fully open source and a mixed open/closed source driver, both using the AMDGPU kernel space driver as their core. The pure open source driver will fulfill the need for a fully open driver for distros that only ship open source or for users who specifically want/need all open source. Meanwhile RTG’s closed driver, the successor to the previous Catalyst/fglrx driver, will build off of AMDGPU but add RTG’s closed user mode driver components such as their (typically superior) OpenGL and multimedia runtimes.

The significant change here is that by having the RTG closed source driver based around the open source driver, the company is now only maintaining a single code base and is pushing as much as possible into open source, and the open source driver is receiving these features far sooner than it was previously. This greatly improves the quality of life for open source driver users, but it also benefits RTG: it’s a lot easier to keep up to date with Linux kernel changes with an open source kernel mode driver than with a closed source driver, and to quickly integrate improvements submitted by other developers.

This driver is also at the heart of RTG’s plans for the professional and HPC markets. At SC15 AMD announced their Boltzmann initiative to develop a CUDA source code shim and the Heterogeneous Compute Compiler for their GPUs, all of which will be built on top of their new Linux driver and the headless + HSA abilities it will be able to provide. It’s here that RTG is also looking to capitalize on the open source nature of the driver, giving HPC users and developers far greater access than what they’re accustomed to with NVIDIA’s closed-source driver and allowing them to see the code behind the driver that’s interpreting and executing their programs.

GPUOpen: RTG’s SDKs, Libraries, & Tools To Go Open Source

The second half of RTG’s briefing was focused on developer relations. Here the company has several needs and initiatives, ranging from countering NVIDIA and their GameWorks SDKs/libraries to developing tools to better utilize RTG’s hardware and the heterogeneous system architecture. At the same time the group is also looking to better leverage their sweep of the current generation consoles, and turn those wins into a distinct advantage within the PC space.

To that end, not unlike RTG’s Linux efforts, the group is embarking on a new, more open direction for GPU SDK and library development. Being announced today is RTG’s GPUOpen initiative, which will combine RTG’s various SDKs and libraries under the single GPUOpen umbrella, and then take all of these components open source.

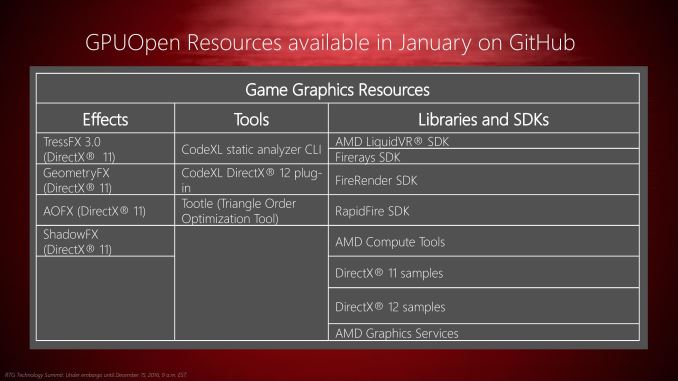

Starting with the umbrella aspect, with GPUOpen we’re going to see RTG travel down the same path of bundled tools and developer portals that NVIDIA has been following for the last couple of years with GameWorks. To NVIDIA’s credit they have been very successful in reaching developers via this method – both in terms of SDKs and in terms of branding – so it makes a great deal of sense for RTG to develop something similar. Consequently, GPUOpen will be bringing RTG’s various SDKs, tools, and libraries such as TressFX, LiquidVR, CodeXL, and their DirectX code samples underneath the GPUOpen branding umbrella. At the same time RTG is developing a GPUOpen portal to make all of these resources available from a single location, and will also be using that portal to publish news and industry updates for game developers.

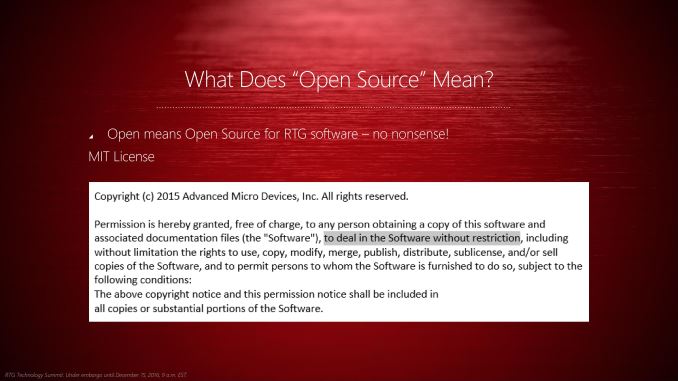

But more interesting than just bringing all of RTG’s developer resources under the GPUOpen brand is what RTG is going to do with those resources: set them free. While the group has dabbled with open source over the last several years, beginning with GPUOpen in 2016 they will be fully committed to it. Everything at the GPUOpen portal will be open source and licensed under the highly permissive MIT license, allowing developers to not only see the code behind RTG’s tools and libraries, but to integrate that code into open and closed source projects as they see fit.

Previously RTG had offered some bits and pieces of their code on an open source basis, but those projects were typically using more restrictive licenses than MIT. GPUOpen on the other hand will see the code behind these projects become available to developers under one of the most permissive licenses out there (short of public domain), which in turn allows developers to essentially do whatever they please while also avoiding any compatibility issues with other open source licenses (e.g. GPL).

Otherwise the fact that RTG is going to be placing so much of their code into the open source ecosystem is a big step for the group. Ultimately they believe that there is much to gain both from letting developers freely use this code, and from allowing them to submit their own improvements and additions back to RTG. In a sense this is the anti-GameWorks: whereas NVIDIA favors closed source libraries or limited sharing, RTG is placing everything out there in the hope that their own development efforts, coupled with other developers’ ability to contribute via open source development, will produce a better product.

Finally, as part of the GPUOpen portal and RTG’s open source efforts, the company will be hosting these projects on the ever-popular GitHub platform, allowing developers to fork the projects and submit changes as they see fit. The portal and the GitHub repositories for the initial projects will be launching in January. And though RTG didn’t offer much in the way of details for their future plans, they have strongly hinted that this is just the beginning for them, and that they are developing additional effects libraries and code samples that will be made available in the not-too-distant future. Ultimately with the first DirectX 12 games shipping next year and with Vulkan expected to be finalized in 2016 as well, I wouldn’t be surprised to see the GPUOpen portal become the focal point of RTG’s developer relations efforts for low-level GPU programming, while further leveraging the similarities between console development and low-level PC development.